Version Pinning by Default

by Instill AI

We’ve removed all `latest` tags from Instill Core service images. Going forward, every service must reference a specific Git tag or commit hash, making dependencies more stable and traceable.

What’s new

- Services no longer use `latest`; everything is now explicitly versioned.
- Better reproducibility across environments.
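The pinning rule can be illustrated with a small shell check. This is a minimal sketch, not part of Instill Core: the function name `is_pinned_ref` is hypothetical, and the patterns it accepts (a `vMAJOR.MINOR.PATCH`-style tag, or a 7 to 40 character hexadecimal commit hash) are assumptions about what counts as "pinned".

```shell
# Hypothetical helper (not part of Instill Core): exit 0 if a reference
# looks pinned, exit 1 otherwise. The accepted patterns are assumptions.
is_pinned_ref() {
  ref=$1
  case $ref in
    "" | latest) return 1 ;;  # empty or floating references are rejected
  esac
  # Release-style tag: optional "v", then MAJOR.MINOR.PATCH, optional suffix
  if printf '%s\n' "$ref" | grep -Eq '^v?[0-9]+\.[0-9]+\.[0-9]+([-+][0-9A-Za-z.-]+)?$'; then
    return 0
  fi
  # Commit hash: 7 to 40 lowercase hex characters (short or full SHA-1)
  if printf '%s\n' "$ref" | grep -Eq '^[0-9a-f]{7,40}$'; then
    return 0
  fi
  return 1
}

# Example: classify a few candidate references
for ref in latest v1.2.3 3f2a9c1 main; do
  if is_pinned_ref "$ref"; then
    echo "$ref: pinned"
  else
    echo "$ref: not pinned"
  fi
done
```

A purely hexadecimal branch name would also pass the commit-hash check, so a stricter version would resolve the reference against the repository instead of matching it by shape alone.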

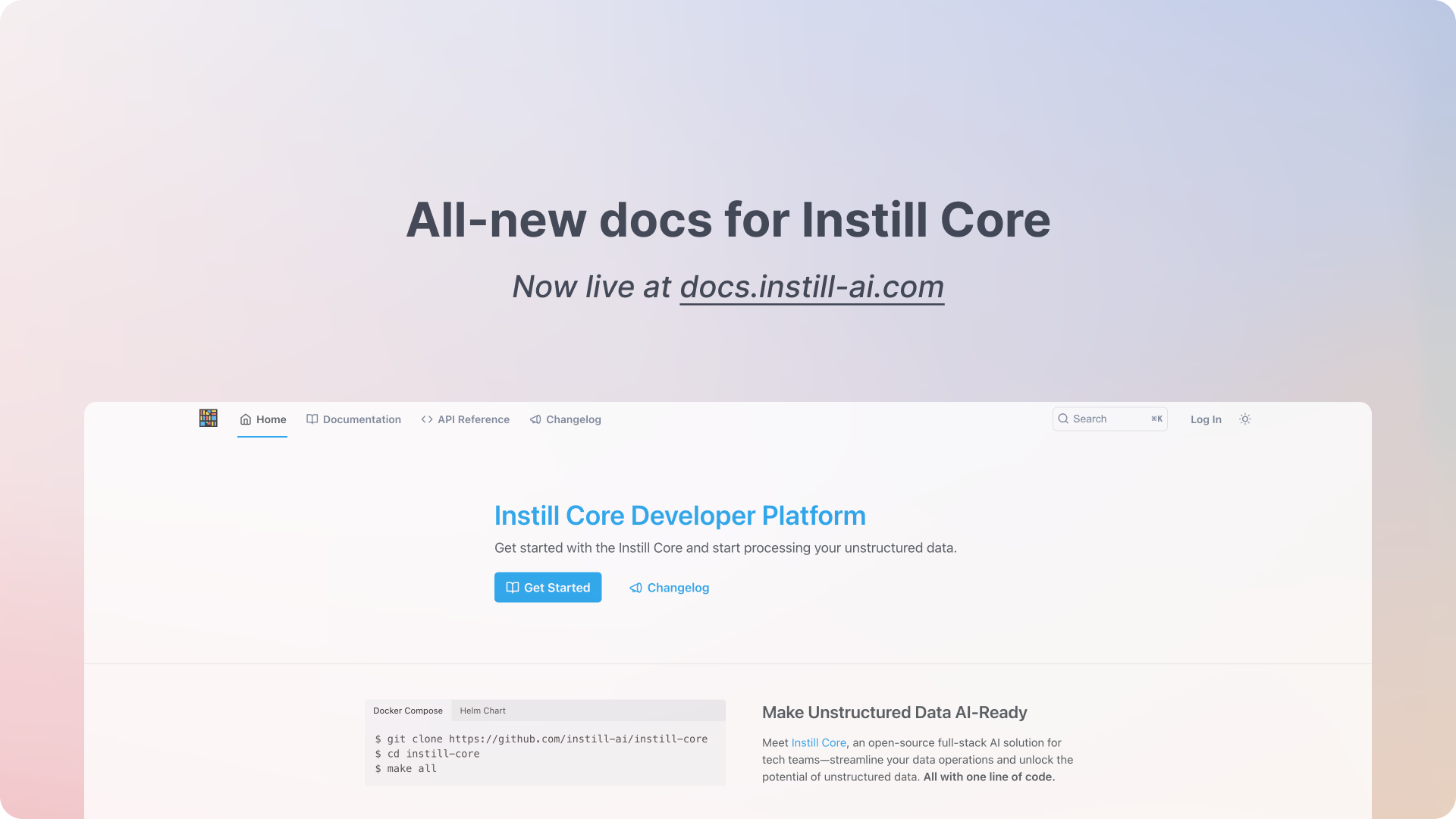

How to run Instill Core CE

- Stable version (recommended for most users):

git clone -b $VERSION https://github.com/instill-ai/instill-core.git && cd instill-core

make run

Replace `$VERSION` with the latest release tag.
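If you prefer to look up the latest release tag from the command line, one option is to filter the output of `git ls-remote --tags`. This is a sketch under stated assumptions: `latest_release_tag` is a hypothetical helper, and it treats only plain `vMAJOR.MINOR.PATCH` tags as releases, skipping any tag with a suffix (such as a pre-release label). If the project's release tags follow a different scheme, adjust the pattern, or simply check the repository's releases page.

```shell
# Hypothetical helper: read `git ls-remote --tags` output on stdin and print
# the highest plain vMAJOR.MINOR.PATCH tag (assumed release naming scheme).
# Steps: strip everything up to "refs/tags/"; keep only plain release tags
# (this also drops peeled "^{}" entries and suffixed tags); drop the "v";
# sort numerically per dot-separated field so 0.10.0 sorts after 0.9.0;
# keep the last line; restore the "v".
latest_release_tag() {
  sed -n 's|^.*refs/tags/||p' |
    grep -E '^v[0-9]+\.[0-9]+\.[0-9]+$' |
    sed 's/^v//' |
    sort -t. -k1,1n -k2,2n -k3,3n |
    tail -n 1 |
    sed 's/^/v/'
}

# Usage (needs network access):
#   VERSION=$(git ls-remote --tags https://github.com/instill-ai/instill-core.git | latest_release_tag)
#   git clone -b "$VERSION" https://github.com/instill-ai/instill-core.git && cd instill-core
```

Sorting on each numeric field, rather than as plain text, avoids the common mistake of ranking `v0.9.0` above `v0.10.0`.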

- Development version (for contributors and the newest, not-yet-released CE features):

git clone https://github.com/instill-ai/instill-core.git && cd instill-core

make compose-dev

Please update your local setup or deployment scripts accordingly.